PhD Student at the Max Planck ETH Center for Learning Systems (CLS).

Thomas Wimmer

I am a doctoral student in the Max Planck ETH Center for Learning Systems (CLS) and ELLIS programs. My advisors are Jan Eric Lenssen, Bernt Schiele (MPI), and Siyu Tang (ETH).

I previously completed master’s degrees at the Technical University of Munich and the Institut Polytechnique de Paris. During my studies, I worked in the groups of Daniel Cremers, Maks Ovsjanikov, Peter Wonka, and Federico Tombari.

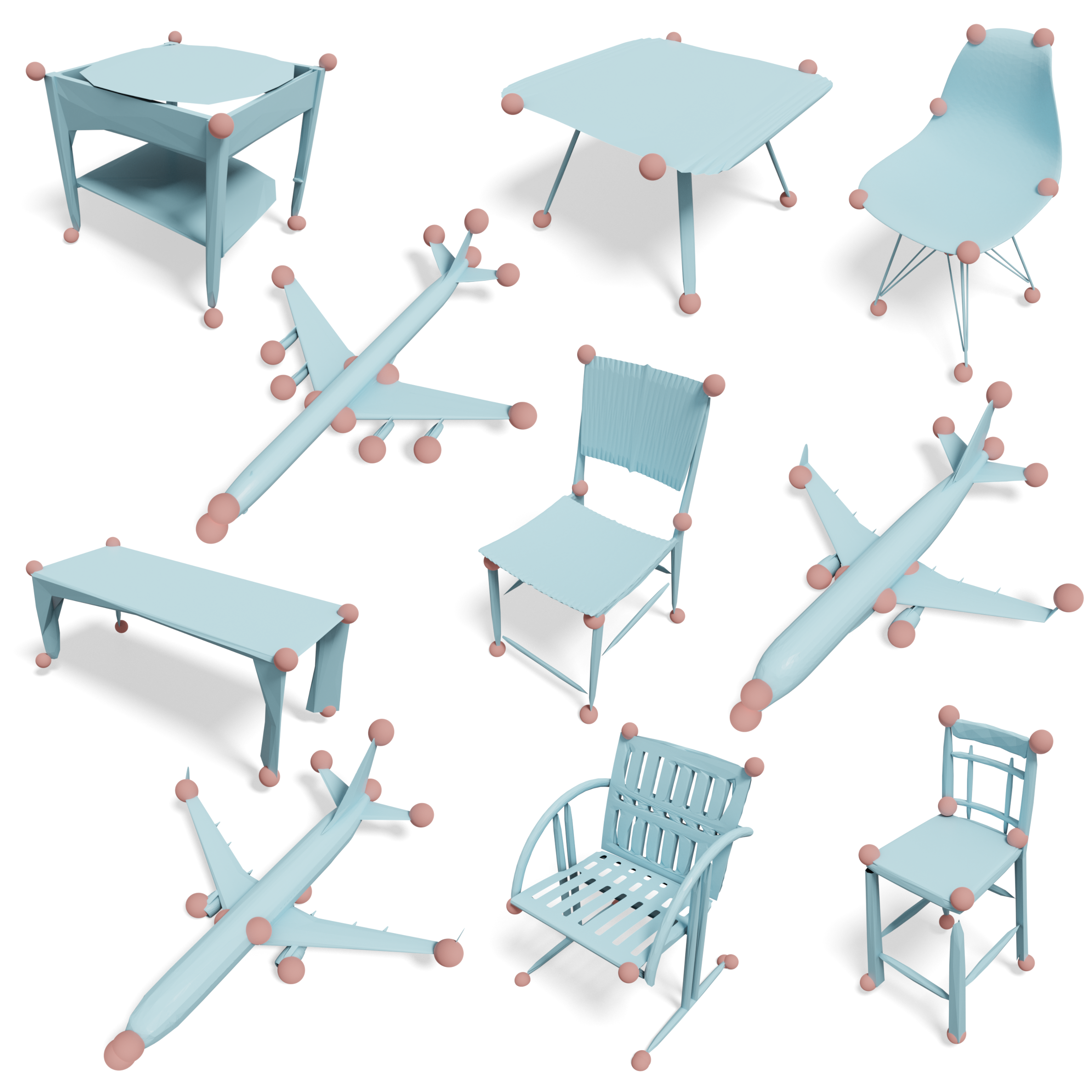

My main research interests are visual representation learning and 3D computer vision. However, I am always open to new ideas and collaborations in related fields. This website gives you an overview of my recent research and other projects.

I am currently spending time at Google Zurich as a Student Researcher.

Publications

Research papers and preprints

Blog

Notes and project write-ups

Contact

Get in touch with me

news

| Date | News |
|---|---|
| May 10, 2026 | Excited to give a talk at the BLISS Speaker Series on June 16, 2026 at 7 PM. My talk will be titled “Beyond Patches: Learning Dense Visual Features”. |
| May 10, 2026 | Happy to share that I will start a new position as a Student Researcher at Google in Zurich in June 2026. |

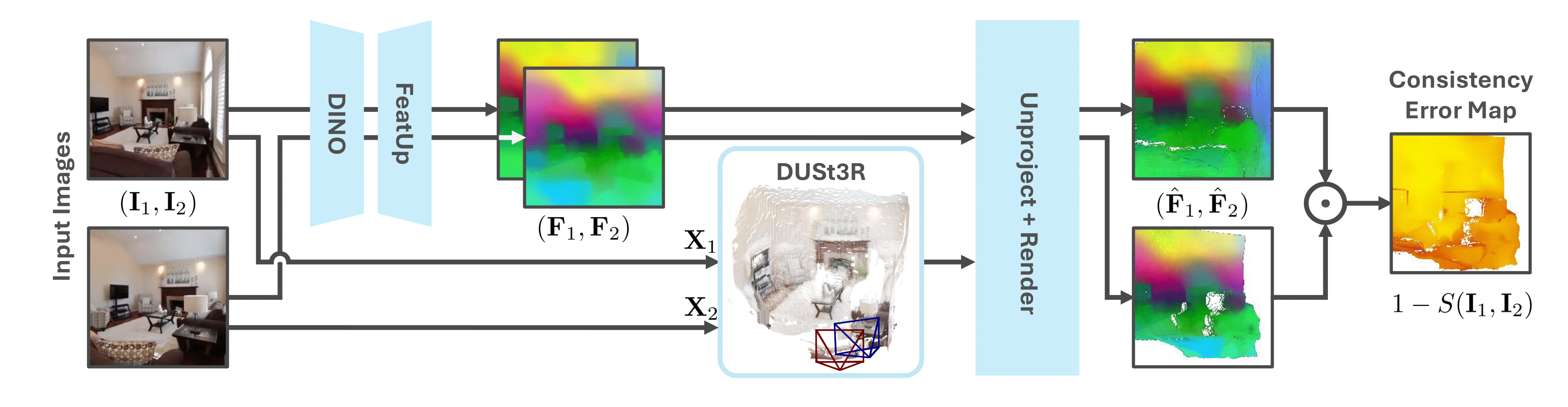

| Oct 14, 2025 | New pre-print: “AnyUp: Universal Feature Upsampling” is now available on arXiv! Super excited to share this work, in which we propose a first-of-its-kind feature-agnostic upsampling architecture that can upsample features from any vision model at any resolution without encoder-specific training. AnyUp achieves new state-of-the-art results on multiple downstream benchmarks and is the first upsampler that naturally generalizes to different feature types at inference time. Accepted to ICLR 2026 as an oral presentation! |

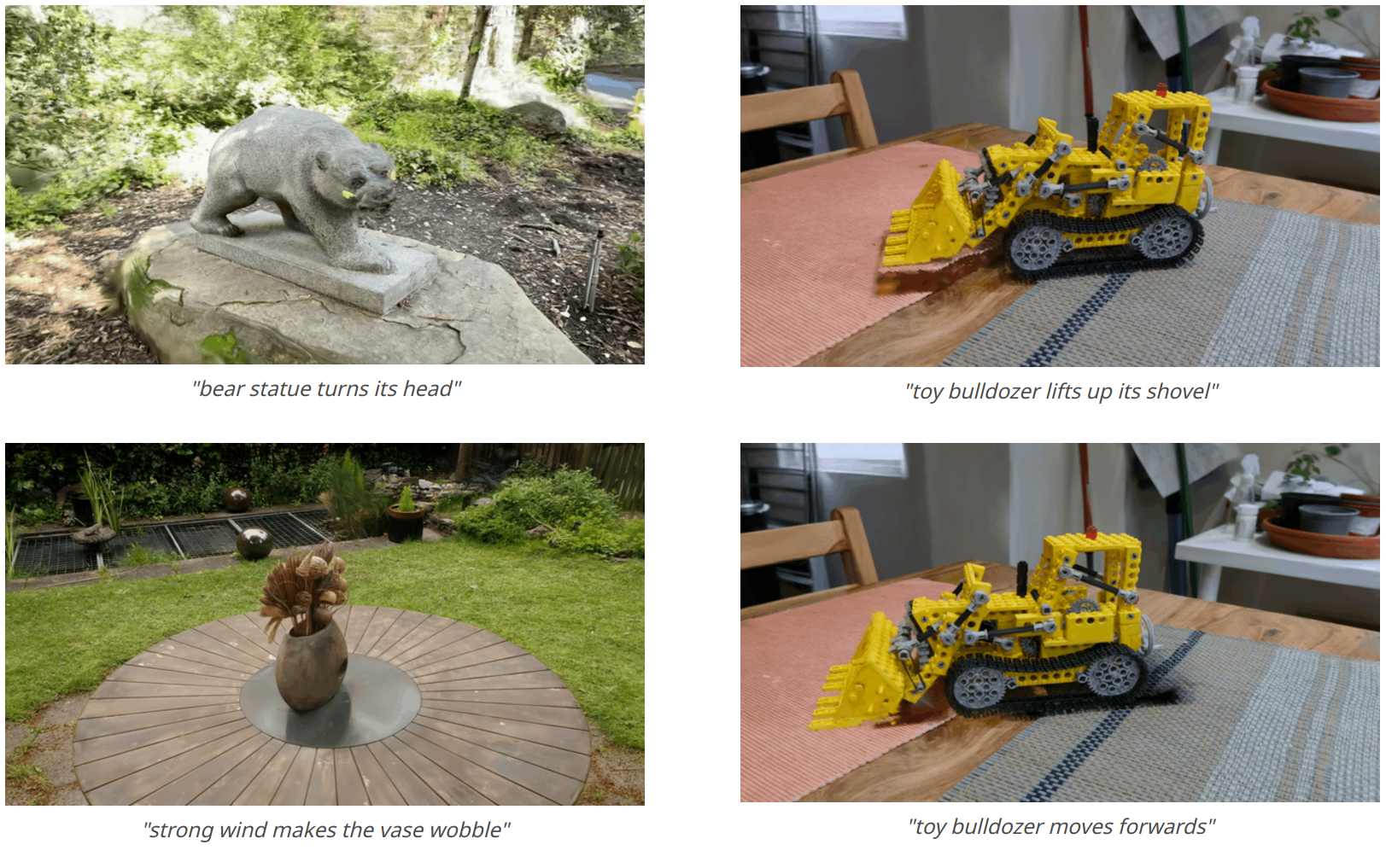

| Jun 05, 2025 | New pre-print: “Do It Yourself: Learning Semantic Correspondence from Pseudo-Labels” is now available on arXiv! We show that foundational features can be refined with an adapter trained on pseudo-labels, which are themselves zero-shot predictions from the same foundational features. We improve the quality of the pseudo-labels through 3D-aware chaining with cycle-consistency and reject wrong pairs using a spherical prototype. The method achieves new state-of-the-art results on SPair-71k and scales to larger datasets. Accepted to ICCV 2025! |